Despite the fact that it’s 2012 and we’re more than a decade into the digital revolution of high definition (and beyond) moving images, there is still a very narrow bottleneck that keeps digital shooters from getting everything they want. That bottleneck is data. The sheer amount of information that needs to be recorded from today’s high-definition and cinema-definition cameras is staggering, and we don’t—yet—have the technology to do so.

In order to understand how data flows and the problems it creates, we need to understand the basics of digital information, so we’ll start with the very building blocks.

Bits and Bytes

In the world of binary communication, one bit can define two states of being: on/off, zero/one, black/white. Whatever the information may be, one bit can describe two states, generally polar opposites.

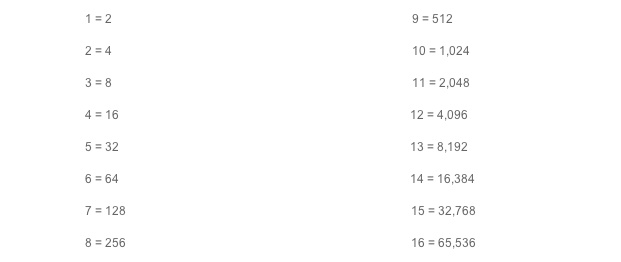

If one bit can define two states, then two bits can define four states; makes sense, right? From there the count doubles with each added bit, so it grows exponentially: three bits equals eight states (2 x 2 x 2 = 8), four bits equals 16 states (2 x 2 x 2 x 2 = 16) and so forth. In general, n bits can define 2^n states.
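The doubling described above can be tabulated with a short sketch. The function name `states` and the particular bit counts printed are illustrative choices, not part of the original article.

```python
def states(bits: int) -> int:
    """Number of distinct values that `bits` binary digits can represent."""
    return 2 ** bits


# Print a small table for bit counts that come up in imaging work.
for n in (1, 2, 3, 4, 8, 10, 12, 16):
    print(f"{n:>2} bits = {states(n):>6,} states")
```

Each added bit doubles the previous row, which is why 16 bits lands at 65,536 states rather than twice the 256 states of 8 bits.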

Note that a binary system works in powers of two, not the powers of 10 of the metric system, so numbers we’re used to seeing as clean and round aren’t. Prefixes like kilo (K), nominally 1,000, and mega (M), nominally 1,000,000, take on binary values instead: one kilobit (Kb) is actually 1,024 bits (b), not 1,000, and one megabit (Mb) is 1,048,576 bits (1,024 x 1,024), not 1,000,000. That often causes confusion. When you buy a hard drive, its capacity is stated in base 10, so one terabyte (TB) is one trillion (1,000,000,000,000) bytes; however, because your computer measures data on the binary scale, that same drive shows up as only about 931.32 gigabytes (GB).

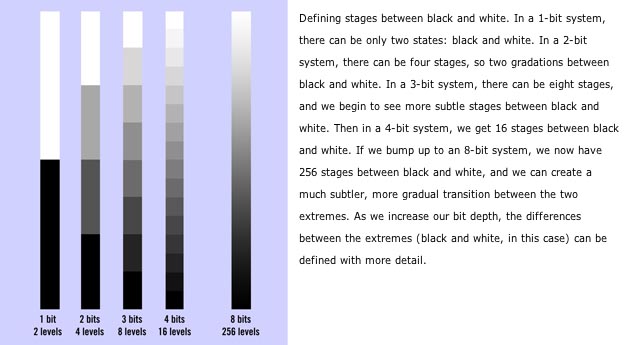
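The hard drive arithmetic above can be checked in a few lines. This is a minimal sketch; the constant and function names are hypothetical, and it assumes the drive maker counts a terabyte as exactly 10^12 bytes while the computer divides by 1,024 three times.

```python
BINARY_KILO = 1024              # binary "kilo"
BINARY_MEGA = BINARY_KILO ** 2  # 1,048,576
BINARY_GIGA = BINARY_KILO ** 3  # 1,073,741,824


def advertised_tb_as_binary_gb(terabytes: float) -> float:
    """Convert a base-10 terabyte figure into binary gigabytes."""
    total_bytes = terabytes * 10 ** 12
    return total_bytes / BINARY_GIGA


print(f"1 TB drive = {advertised_tb_as_binary_gb(1):.2f} GB on the binary scale")
```

No storage is missing; the same number of bytes is simply being divided by a larger unit.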

More Bits Is Better

Relatively speaking, the more bits we have to define any particular piece of information, the more accurately that information can be defined. If we’re trying to create a grayscale that defines gradations between black and white, the more bits we have, the more subtly and accurately we can represent that scale.

If we’re looking at an 8-bit RGB delivery system, as most exhibition/display formats are, we find that we have eight bits of red, eight bits of green and eight bits of blue information. Since each eight bits represents 256 individual states or levels, 8-bit RGB can reproduce 16,777,216 different colors (256 x 256 x 256). This color level is, arguably, the highest the human eye is capable of differentiating. When we work with digital images, though, it’s nice to have more room so we can pick which eight bits of information we use, which is why many camera systems are 10-, 12- or even 16-bit.

The number of colors increases exponentially with higher bit depths. In a 10-bit system, 1,073,741,824 colors are available (1024 x 1024 x 1024). In a 16-bit system, you have 281,474,976,710,656 colors (65,536 x 65,536 x 65,536). Now that’s a big crayon box. Actually, the colors available in a 16-bit system are well beyond the human range of vision; most of them will be discarded when we reach an exhibition format, be that a motion picture theater screen via Digital Cinema Projection, a home television, a computer screen or even a mobile phone. Having 16 bits of information at the capture stages gives us more flexibility, especially in terms of dynamic range, to choose our final eight bits (or 12 bits in some rare cases, such as digital cinema projectors) later on.
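The color counts quoted above all come from the same rule: levels per channel, cubed. A small sketch, with `rgb_colors` as an illustrative name:

```python
def rgb_colors(bits_per_channel: int) -> int:
    """Distinct RGB colors when each of three channels has the given bit depth."""
    levels = 2 ** bits_per_channel  # e.g. 256 levels for 8 bits
    return levels ** 3              # red x green x blue


for depth in (8, 10, 12, 16):
    print(f"{depth:>2}-bit RGB: {rgb_colors(depth):,} colors")
```

Going from 8 to 10 bits adds only two bits per channel, yet it multiplies the color count by 64 (4 x 4 x 4).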

If we look at what a full 10-bit RGB HD signal should be, we’ll see there’s a great deal of information to be recorded. How much? Well, it starts with the pixel count. To get the total pixels, multiply the horizontal count by the vertical count. Multiply that number by 10 for the number of bits of information per pixel, and then multiply that by three, because we’re dealing with a tri-stimulus system that has three colors: red, green and blue.

Once we arrive at the total number of bits, we’ll reduce that number to a more manageable size by converting to kilobits (Kb)—by dividing the total by 1,024 (the binary version of 1,000). We’ll further reduce the number by converting to megabits, again dividing by 1,024. This gives us our total in megabits (Mb), which we can further break down into megabytes by dividing that total by eight (eight bits to a byte (B)).

1920 x 1080 = 2,073,600 pixels

x 10 bits per pixel = 20,736,000 bits

x 3 (R, G, B) = 62,208,000 bits

/ 1024 = 60,750 Kb (kilobits)

/ 1024 = 59.33 Mb (megabits)

/ 8 = 7.42 MB (megabytes)

1920 x 1080 10-bit RGB video is 7.42 MB (59.33 Mb) per frame.

x 23.976 = 177.8 MB (1,422.4 Mb) per second
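The same steps can be wrapped in a small function so other frame sizes and bit depths are easy to try. This is a sketch under the article’s conventions (binary kilo and mega, 23.976 frames per second); the names `FrameRate` and `uncompressed_rate` are invented for illustration, and results computed without intermediate rounding may differ from hand-rounded figures in the last digit.

```python
from typing import NamedTuple


class FrameRate(NamedTuple):
    bits_per_frame: int
    megabits_per_frame: float
    megabytes_per_frame: float
    megabits_per_second: float
    megabytes_per_second: float


def uncompressed_rate(width: int, height: int, bit_depth: int,
                      samples_per_pixel: int = 3,
                      fps: float = 23.976) -> FrameRate:
    """Uncompressed data rate using binary units (1 Mb = 1,024 x 1,024 bits)."""
    bits = width * height * bit_depth * samples_per_pixel
    megabits = bits / 1024 / 1024   # bits -> kilobits -> megabits
    megabytes = megabits / 8        # eight bits to a byte
    return FrameRate(bits, megabits, megabytes, megabits * fps, megabytes * fps)


hd = uncompressed_rate(1920, 1080, 10)
print(f"{hd.bits_per_frame:,} bits per frame")
print(f"{hd.megabytes_per_frame:.2f} MB ({hd.megabits_per_frame:.2f} Mb) per frame")
print(f"{hd.megabytes_per_second:.1f} MB ({hd.megabits_per_second:.1f} Mb) per second")
```

Setting `samples_per_pixel=1` covers single-sensor cameras that record one value per photosite.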

That’s an extraordinary amount of information.

The Bottleneck: Record Media

To put that number in context, let’s look at the highest possible data rates for some common types of videotape. (Remember that stuff we used to record images onto?)

Mini DV/HDV = 3.125 MB/s (25 Mb/s)

DVCPRO50 = 6.25 MB/s (50 Mb/s)

DVCPRO HD = 12.5 MB/s (100 Mb/s)

HDCAM = 17.5 MB/s (140 Mb/s)

HDCAM-SR = 55 MB/s (440 Mb/s)

Looking at that list of tape types, we see that even the videotape with the highest data rate, HDCAM-SR, can record only 440 Mb/s, which is less than one-third the required data rate for full 10-bit HD video.

This creates a significant problem. Our best and fastest (and, of course, largest and most expensive) common videotape format can’t record all of the information from a full HD signal. So how do we do it?

We compress the information from the camera in order to reduce the data rate and fit it onto the medium. This, ladies and gentlemen, is where that ugly compression comes in. In order to fit the pretty images we want into the limitations of the media we have, we have to compress them (discard information) to reduce the overall data rate. Some formats, such as HDV or AVCCAM, apply considerable compression to get HD images onto media that records at roughly 3.125 MB/s (25 Mb/s). Other formats, such as DVCPRO HD or HDCAM, have a much higher data rate, so less compression is required.
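To get a rough feel for how much has to be thrown away, the uncompressed HD rate can be divided by each format’s record rate. Treat this as a back-of-the-envelope sketch, not a statement of any codec’s actual ratio: real formats also reduce resolution, bit depth or color sampling before compressing, and the sketch mixes the binary megabits of the worked example with the nominal Mb/s ratings in the tape list. The names below are illustrative.

```python
# Uncompressed 1920 x 1080, 10-bit RGB at 23.976 fps, in binary megabits per second.
UNCOMPRESSED_MBPS = 1920 * 1080 * 10 * 3 * 23.976 / 1024 ** 2

# Nominal record rates (Mb/s) taken from the tape list above.
FORMAT_MBPS = {
    "Mini DV/HDV": 25,
    "DVCPRO HD": 100,
    "HDCAM": 140,
    "HDCAM-SR": 440,
}


def required_ratio(target_mbps: float,
                   source_mbps: float = UNCOMPRESSED_MBPS) -> float:
    """How many times larger the source stream is than the record rate."""
    return source_mbps / target_mbps


for name, rate in FORMAT_MBPS.items():
    print(f"{name:<12} {rate:>4} Mb/s -> about {required_ratio(rate):.1f}:1")
```

Even the fastest tape on the list needs the signal reduced by more than a factor of three, while the 25 Mb/s formats need it reduced by a factor of more than 50.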

This is a primary reason for the move to tapeless acquisition. Solid-state media has a much higher data rate than videotape.

However, we’re chasing our own shadows a bit as we’re getting cameras with higher and higher pixel counts. With larger pixel counts on higher-resolution cameras like the RED EPIC, you’re looking at even higher data rates. The EPIC has an image area of 5120 x 2700 and is a 16-bit system. It’s not a tri-stimulus camera, though; it has a Bayer pattern color array on a single sensor, so each photosite records a single 16-bit value and there’s no multiplying by three. That puts the EPIC at 221,184,000 bits per frame.

5120 x 2700 = 13,824,000 pixels

x 16 bits per pixel = 221,184,000 bits

/ 1024 = 216,000 Kb (kilobits)

/ 1024 = 210.94 Mb (megabits)

/ 8 = 26.37 MB (megabytes)

5K 16-bit is 26.37 MB (210.94 Mb) per frame.

x 23.976 = 632.18 MB (5,057.44 Mb) per second

With data rates of this magnitude, videotape is not an option. Neither are standard connections to external hard drives:

FireWire 400: 49.15 MB/s (393.22 Mb/s)

USB 2.0: 60 MB/s (480 Mb/s)

FireWire 800: 98.3 MB/s (786.43 Mb/s)
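A quick comparison shows how far short these connections fall. The sketch below uses the interface figures listed above as theoretical maximums (sustained real-world throughput is typically lower) and recomputes the EPIC’s uncompressed rate at 23.976 frames per second; the names are illustrative.

```python
# Uncompressed 5120 x 2700, 16-bit, one sample per photosite, 23.976 fps, in MB/s.
EPIC_MB_PER_S = 5120 * 2700 * 16 * 23.976 / 1024 ** 2 / 8

# Maximum interface rates (MB/s) as listed above.
INTERFACE_MB_PER_S = {
    "FireWire 400": 49.15,
    "USB 2.0": 60.0,
    "FireWire 800": 98.3,
}


def shortfall(interface_rate: float, required: float = EPIC_MB_PER_S) -> float:
    """How many times faster the camera produces data than the link can carry it."""
    return required / interface_rate


for name, rate in INTERFACE_MB_PER_S.items():
    print(f"{name:<12} {rate:>6.2f} MB/s -> camera outruns it {shortfall(rate):.1f}x")
```

Even FireWire 800, the fastest of the three, would need to be more than six times faster to carry the uncompressed signal.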

This limitation leads manufacturers to find ways to get the media closer to the information bus in the camera, namely, today’s solid-state media drives like P2, SxS and REDMAG drives.

With HD and 5K data rates in mind, it’s readily apparent how much compression is applied in formats like HDV, with an HD record data rate of 25 Mb/s, or even XDCAM, with 35 or 50 Mb/s. Panasonic’s DVCPRO HD and AVC-Intra formats are both 100 Mb/s, which allows for less compression of the same HD signal. It should go without saying that formats with less compression preserve more image integrity and are much easier to manipulate in postproduction without severe degradation of the image.

Let’s look back at the RED EPIC’s data rate (before compression): 632.18 MB (5,057.44 Mb) per second. That’s at 24 (23.976) frames per second. What about when the EPIC shoots 60 fps? That’s a staggering 1,582 MB (12,656 Mb) per second of data. Even with RED’s lowest compression of 3:1, that’s still 527 MB (4,219 Mb) per second of data. That should give you some idea how fast the data transfer rates of the REDMAGs are.
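The effect of frame rate and compression ratio on the EPIC’s stream can be sketched the same way. This assumes a compression ratio simply divides the uncompressed rate, which is a simplification of how a real codec behaves, and `epic_rate_mb_s` is a hypothetical helper name.

```python
def epic_rate_mb_s(fps: float, compression_ratio: float = 1.0) -> float:
    """Data rate in MB/s for 5120 x 2700, 16-bit, one sample per photosite."""
    bits_per_frame = 5120 * 2700 * 16
    megabytes_per_frame = bits_per_frame / 1024 ** 2 / 8
    return megabytes_per_frame * fps / compression_ratio


for ratio in (1, 3, 6, 8):
    rate = epic_rate_mb_s(60, ratio)
    print(f"60 fps at {ratio}:1 -> {rate:,.0f} MB/s ({rate * 8:,.0f} Mb/s)")
```

Doubling the compression ratio halves the rate the media has to sustain, which is the trade-off a shooter makes when choosing a setting.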

As cameras continue to increase bit depth and pixel counts, our requirement for faster record media increases in urgency. There is currently no media that can record the immense amount of uncompressed data coming off a camera like the RED EPIC at 60 frames per second. Perhaps in a couple of years there will be, but by then people will be demanding 120 frames per second, without compression, from the EPIC’s new 6K Dragon sensor. Someday that may be possible. Today, however, it’s still a dream.